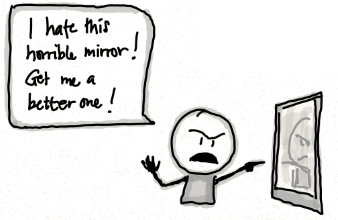

“The truth will set you free, but first it will make you miserable.”

It’s late at night. You shuffle to the kitchen for a snack. Your hand fumbles briefly for the light switch, and… roaches! They quickly scatter, but now you’ve seen them. You know they’re there. From now on, you can’t not think about the roaches in the kitchen. It’s a shame, too, because you always thought of yourself as a neat person with a clean kitchen. But now that image has been ruined. The good news is, now you have better information about the world. You DO have roaches in your kitchen, and you can start to do something about it.

The Roach Reveal is how I think about one of the defining experiences of modern life: everything is getting better and worse at the same time. These days we keep building ever more powerful sensors that show us the virtual vermin lurking beneath the veneers of civilization. We’re seeing roaches we never knew were there, and it feels like we’re being hammered endlessly by terrible news. But look closer: the roaches were always there. Now we can start to do something about them. Often it’s only when we realize just how bad things are that we finally decide to take action.

Related to this topic, I want to convince you to read *The Alignment Problem* by Brian Christian. The book addresses the dangers of machine learning, smart robots, and clever algorithms of all kinds. The title refers to a subtle and disturbing fact: it’s strangely difficult to tell a computer what you want it to do. You can only tell it what you THINK you want it to do. And then it will, with dizzying speed and precision, set about making you miserable, all while doing exactly what you asked. This is often described in terms of deal-with-the-devil jokes: tell the computer to stop people from getting malaria, and it will murder everyone before they can catch malaria. “I solved your problem!” says the robot. “You killed my family!” says the programmer. That’s the alignment problem. It’s not a joke.

One of the topics in the book is algorithmic bias. Suppose you want to teach a robot to hire good employees. Or decide who should get a loan. Or maybe decide who should be granted parole. After you implement some pretty straightforward machine learning, you are almost certain to be disturbed by the results. The computer has learned from a horribly biased society. What else could it learn from? Naturally, it mirrors our racism, sexism, and xenophobia back to us.

Machine learning is the kitchen light. It’s illuminating the roaches that have been crawling through our brains and institutions for hundreds of years. Switching on these algorithms feels like a massive step backwards. We are in danger of encoding extraordinarily efficient prejudice. But the book comes with good news too. No matter how unhappy the kitchen light first makes you, it will also help you solve the problem. Seeing how biased our algorithms are, we can set about attacking the root cause. The root cause is not the kitchen light.

Our computers can teach us to be better humans.